So there I was, staring at a $42,000 licensing renewal invoice for Oracle JDK at 8 AM on a Tuesday. We had exactly three weeks to migrate 40 microservices to OpenJDK before the new billing cycle hit. That was my February.

The 2026 pricing model changes forced our hand. I wasn’t going to justify that budget hit to finance for a runtime environment we could get elsewhere for free. We ripped out Oracle JDK 17 and standardized everything on Eclipse Temurin 21.0.2.

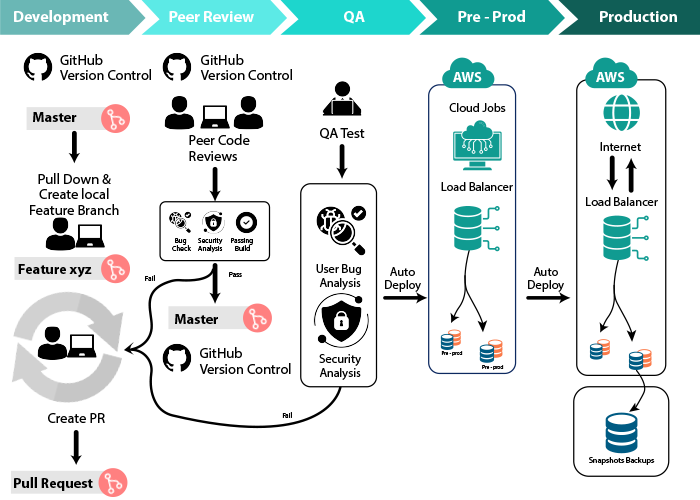

But swapping the JDK was just the start. The migration exposed massive, ugly bottlenecks in our DevOps pipeline that we had been ignoring for months.

The Hidden CI/CD Time Wasters

Our Maven builds were taking 14 minutes and 30 seconds on average. Unacceptable. When you’re pushing code 20 times a day, developers are spending hours just staring at Jenkins spinners.

I dug into the logs and found the culprit. It wasn’t the compiler. It was our new AI integration tests.

We recently added several Java-based AI features using LangChain4j. To test them properly without hitting OpenAI API rate limits in CI, we started spinning up local LLMs using Testcontainers. Every single build was pulling down a 4GB Ollama Docker image, booting it, running three tests, and tearing it down.

Here is a massive gotcha the documentation doesn’t mention. If you run Testcontainers with local LLMs on a standard GitHub Actions runner or an AWS t3.xlarge Jenkins worker, container startup will blow past the default 60-second startup timeout about 30% of the time, seemingly at random. The CPU gets throttled during the model load. You have to bump the timeout to at least 180 seconds and explicitly cache the Docker volume containing the model weights. I lost three days figuring that out.
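For reference, here is a minimal sketch of what that configuration could look like with a plain `GenericContainer`. It is not our exact setup. The image name `ollama/ollama`, port 11434, and the `/root/.ollama` model directory are Ollama's defaults as far as I know; the host cache path is purely hypothetical and has to match whatever directory your CI job restores from its cache. It needs the `org.testcontainers:testcontainers` dependency and a running Docker daemon.

```java
import java.time.Duration;

import org.testcontainers.containers.BindMode;
import org.testcontainers.containers.GenericContainer;
import org.testcontainers.containers.wait.strategy.Wait;
import org.testcontainers.utility.DockerImageName;

final class OllamaContainerFactory {

    // Hypothetical host directory that the CI job restores from its cache.
    private static final String HOST_MODEL_CACHE = "/var/cache/ollama-models";

    static GenericContainer<?> create() {
        return new GenericContainer<>(DockerImageName.parse("ollama/ollama"))
                .withExposedPorts(11434)
                // Keep model weights on the host so they survive container teardown.
                .withFileSystemBind(HOST_MODEL_CACHE, "/root/.ollama", BindMode.READ_WRITE)
                // Wait for the HTTP endpoint, allowing 180 seconds instead of the default 60.
                .waitingFor(Wait.forHttp("/")
                        .forPort(11434)
                        .withStartupTimeout(Duration.ofSeconds(180)));
    }
}
```

Depending on your Testcontainers version, the bind-mount call may be flagged as deprecated, so check the release notes before copying this as-is.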

Building a Smarter Pipeline Analyzer

I needed a way to automatically flag these slow, resource-heavy tests across all 40 repositories so we could isolate them into a nightly build instead of the per-commit pipeline. I wrote a quick Java utility to parse our CI logs and categorize the failures and bottlenecks.

First, I defined a simple contract for the analyzer.

```java
import java.nio.file.Path;
import java.util.List;

public interface PipelineAnalyzer {
    AnalysisResult analyze(Path logFile);
}

public record AnalysisResult(int errorCount, List<String> criticalErrors) {}
```

Then I implemented the logic using the Streams API to chew through the massive log files efficiently. I didn’t want to load a 50MB text file entirely into memory.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.List;

public class BuildLogAnalyzer implements PipelineAnalyzer {

    private static final String ERROR_TAG = "[ERROR]";

    @Override
    public AnalysisResult analyze(Path logFile) {
        // Files.lines reads lazily; try-with-resources closes the file handle
        try (var lines = Files.lines(logFile)) {
            List<String> criticalErrors = lines
                    .filter(line -> line.contains(ERROR_TAG) || line.contains("TimeoutException"))
                    // Ignore the annoying SLF4J multiple bindings warning everyone has
                    .filter(line -> !line.contains("SLF4J: Class path contains multiple SLF4J bindings"))
                    .map(this::extractMeaningfulMessage)
                    .distinct()
                    .toList();

            return new AnalysisResult(criticalErrors.size(), criticalErrors);
        } catch (IOException e) {
            throw new UncheckedIOException("Pipeline log parsing died", e);
        }
    }

    // Drops everything up to and including the [ERROR] tag, if present
    private String extractMeaningfulMessage(String logLine) {
        int errorIndex = logLine.indexOf(ERROR_TAG);
        if (errorIndex != -1) {
            return logLine.substring(errorIndex + ERROR_TAG.length()).trim();
        }
        return logLine.trim();
    }
}
```

This script immediately highlighted that 80% of our pipeline timeouts were coming from just two AI prompt-routing tests. We moved those to a dedicated staging environment.
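The analyzer above only pulls out error lines. For the "flag slow tests" half of the problem, something like the following sketch could work. It is an illustration rather than the code we run: it assumes the logs contain Maven Surefire's per-class summary lines (`Tests run: ..., Time elapsed: 95.21 s - in com.acme.SomeTest`), and the names `TestTiming` and `SlowTestFinder` are made up for this example.

```java
import java.time.Duration;
import java.util.Comparator;
import java.util.List;
import java.util.regex.Matcher;
import java.util.regex.Pattern;
import java.util.stream.Stream;

/** One Surefire summary line: the test class and how long it ran. */
record TestTiming(String testClass, Duration elapsed) {}

final class SlowTestFinder {

    // Matches "Time elapsed: 95.21 s - in com.acme.SomeTest". Newer Surefire
    // versions print "-- in", so one or more dashes are accepted, and any
    // "<<< FAILURE!" marker between the time and the dashes is skipped.
    private static final Pattern SUREFIRE_SUMMARY =
            Pattern.compile("Time elapsed: ([0-9]+(?:\\.[0-9]+)?) s.*?-+ in (\\S+)");

    /** Returns test classes that ran longer than the threshold, slowest first. */
    static List<TestTiming> findSlowTests(Stream<String> logLines, Duration threshold) {
        return logLines
                .map(SUREFIRE_SUMMARY::matcher)
                .filter(Matcher::find)
                .map(m -> new TestTiming(m.group(2), toDuration(m.group(1))))
                .filter(t -> t.elapsed().compareTo(threshold) > 0)
                .sorted(Comparator.comparing(TestTiming::elapsed).reversed())
                .toList();
    }

    // Surefire prints seconds with a fractional part; convert to milliseconds.
    private static Duration toDuration(String seconds) {
        return Duration.ofMillis(Math.round(Double.parseDouble(seconds) * 1000));
    }
}
```

Feeding it `Files.lines(logFile)` with a threshold such as `Duration.ofSeconds(30)` gives a per-repository list of candidates for the nightly build. The threshold is a judgment call, not a number from our pipeline.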

Using AI to Fix AI Tests

Since we already had the LLM infrastructure in our cluster, I decided to be a little petty. I wired up our log analyzer to feed those extracted errors directly into a lightweight local model. Instead of developers digging through Jenkins console output, the pipeline now drops a one-sentence summary of why the build failed directly into Slack.

The implementation is straightforward. I just stream the errors, limit them so I don’t blow up the context window, and fire it off.

```java
import java.util.List;
import java.util.stream.Collectors;

public class FailureSummarizer {

    private final AiClient aiClient;

    public FailureSummarizer(AiClient aiClient) {
        this.aiClient = aiClient;
    }

    public String summarizeBuildFailure(List<String> errors) {
        if (errors.isEmpty()) {
            return "Build passed. No errors found.";
        }

        String prompt = errors.stream()
                .limit(15) // Hard cap to prevent context overflow
                .collect(Collectors.joining(
                        "\n",
                        "You are a strict DevOps engineer. Summarize these Java CI errors in one short sentence:\n",
                        ""
                ));

        return aiClient.generateResponse(prompt);
    }
}
```

The Math Works Out

By migrating to Eclipse Temurin and fixing our Testcontainers caching strategy, we dropped our average build time from 14m 30s to 3m 15s. Memory usage on our Jenkins workers went down by 22% because we weren’t randomly hanging on orphaned Java processes from timed-out Oracle JDK containers.

We saved the $42,000 on licensing. We saved hundreds of developer hours a month by fixing the pipeline waste. And the tests actually pass on the first try now.
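As a rough sanity check on the build-time numbers, the arithmetic can be sketched with `java.time.Duration`. The only assumption is that the 20 pushes a day mentioned earlier each trigger one build; the class name `BuildTimeSavings` is just for this example.

```java
import java.time.Duration;
import java.util.Locale;

final class BuildTimeSavings {

    static final Duration BEFORE = Duration.ofMinutes(14).plusSeconds(30);
    static final Duration AFTER = Duration.ofMinutes(3).plusSeconds(15);
    // Assumption: each of the 20 daily pushes triggers exactly one build.
    static final int BUILDS_PER_DAY = 20;

    static Duration savedPerBuild() {
        return BEFORE.minus(AFTER);
    }

    static Duration savedPerDay() {
        return savedPerBuild().multipliedBy(BUILDS_PER_DAY);
    }

    static double reductionPercent() {
        return 100.0 * savedPerBuild().toSeconds() / BEFORE.toSeconds();
    }

    public static void main(String[] args) {
        System.out.println(savedPerBuild()); // PT11M15S
        System.out.println(savedPerDay());   // PT3H45M
        System.out.println(String.format(Locale.ROOT, "%.1f%%", reductionPercent())); // 77.6%
    }
}
```

That is 11 minutes 15 seconds off every build, or about 3 hours 45 minutes of pipeline time per day at 20 builds.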

Audit your CI/CD logs. You probably have a massive container downloading every single run that nobody knows about.

Common questions

Why does Testcontainers fail when running local LLMs on GitHub Actions?

On standard GitHub Actions runners or AWS t3.xlarge Jenkins workers, container startup exceeds the default 60-second Testcontainers startup timeout roughly 30% of the time because the CPU gets throttled while the model loads. Bump the timeout to at least 180 seconds and explicitly cache the Docker volume containing the model weights. Without that cache, every build downloads multiple gigabytes for the Ollama container again, which makes a timeout even more likely.

How much can migrating from Oracle JDK to Eclipse Temurin cut Maven build times?

After moving 40 microservices from Oracle JDK 17 to Eclipse Temurin 21.0.2 and fixing Testcontainers caching, average Maven build time dropped from 14 minutes 30 seconds to 3 minutes 15 seconds. Jenkins worker memory usage also fell by 22% because builds stopped hanging on orphaned Java processes from timed-out Oracle JDK containers. The licensing migration also saved $42,000 on the 2026 renewal.

How do you parse large Jenkins log files in Java without loading them into memory?

Use the Streams API with Files.lines(Path) inside a try-with-resources block so each line is processed lazily instead of loading the full 50MB log into memory. Filter for [ERROR] or TimeoutException entries, drop noisy SLF4J multiple-bindings warnings, map each line to a meaningful message, then call distinct().toList() to deduplicate. Wrap IOException as UncheckedIOException so callers of analyze don’t have to declare or catch a checked exception.

How can you automatically summarize Java CI build failures using a local LLM?

Pipe the extracted error lines from your log analyzer into a lightweight local model already running in your cluster. Stream the errors, hard-cap them with .limit(15) to avoid context-window overflow, and join them under a prompt instructing the model to act as a strict DevOps engineer summarizing Java CI errors in one short sentence. The response gets posted directly to Slack instead of forcing developers into Jenkins console output.
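To see what the model actually receives, here is a small sketch of the prompt assembly with a stub client. The `AiClient` interface is an assumed shape, since the real one isn't shown in this post, and `PromptDemo` exists only for this example.

```java
import java.util.List;
import java.util.stream.Collectors;
import java.util.stream.IntStream;

// Assumed shape of the client: one method that takes a prompt and returns text.
interface AiClient {
    String generateResponse(String prompt);
}

final class PromptDemo {

    static final int MAX_ERRORS = 15;
    static final String INSTRUCTION =
            "You are a strict DevOps engineer. Summarize these Java CI errors in one short sentence:\n";

    // Instruction line first, then at most MAX_ERRORS errors, one per line.
    static String buildPrompt(List<String> errors) {
        return errors.stream()
                .limit(MAX_ERRORS)
                .collect(Collectors.joining("\n", INSTRUCTION, ""));
    }

    public static void main(String[] args) {
        List<String> errors = IntStream.rangeClosed(1, 40)
                .mapToObj(i -> "Test " + i + " timed out")
                .toList();

        // Stub client: reports how many lines it received instead of calling a model.
        AiClient stub = prompt -> "received " + prompt.lines().count() + " lines";

        System.out.println(stub.generateResponse(buildPrompt(errors))); // received 16 lines
    }
}
```

Note that the cap counts lines, not tokens. A single error line carrying a huge stack trace could still overflow a small context window, so truncating each line as well may be worth considering.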